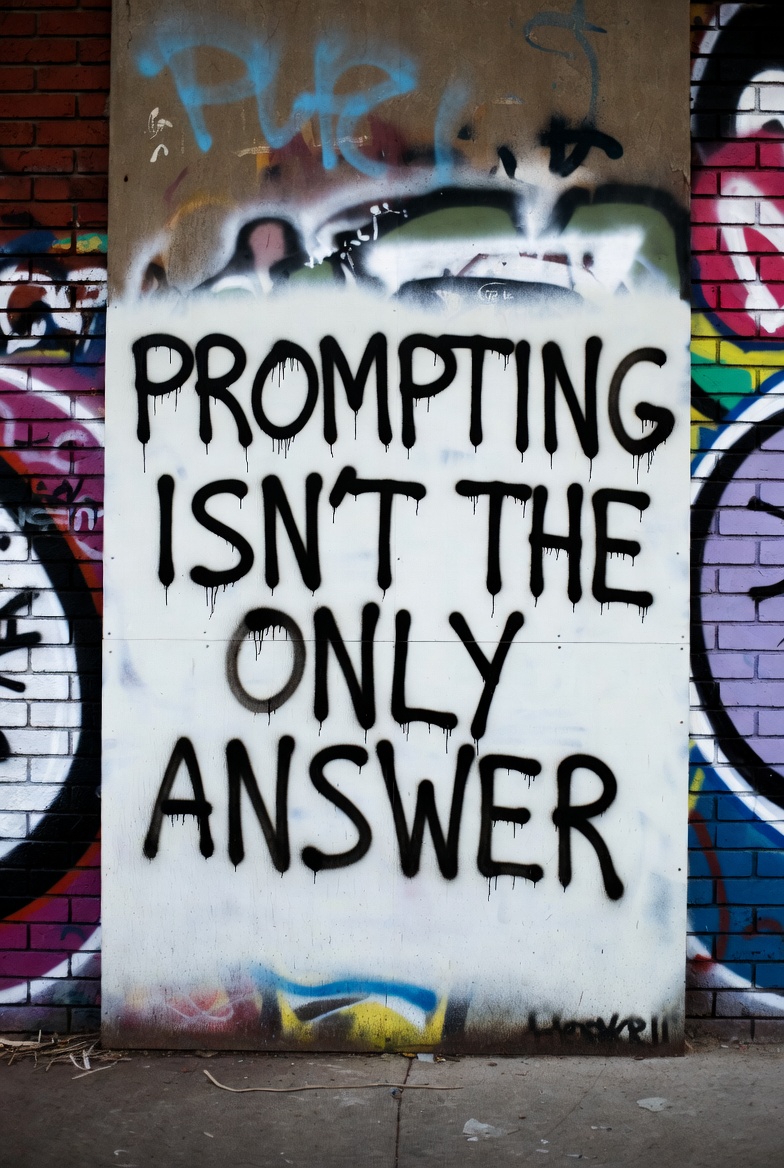

There is a seductive idea circulating in organizations adopting AI: that success lies primarily in crafting better prompts. Master the art of instructing the model, the thinking goes, and you will unlock its potential. Hire a prompt engineer. Build a prompt library. Refine your techniques.

This is not wrong, exactly. Prompt engineering matters. A well-crafted prompt outperforms a careless one. But the emphasis on prompting has become a distraction from the harder, more consequential work that actually determines whether AI initiatives succeed or fail.

Prompt engineering is a tactic. It is not a strategy. And treating it as one helps explain why the vast majority of generative AI pilots fail to deliver measurable business impact.

The uncomfortable truth is this: organizations are not struggling with AI because their prompts are poorly constructed. They are struggling because their data is a mess, their processes have not been redesigned, their governance is absent, and their capabilities have not been built.

In This Post

- The Prompt Engineering Illusion

- What Actually Determines Success

- The Context Engineering Shift

- Why Organizations Get Stuck

- Building Sustainable Capability

- What This Means for Your Organization

The Prompt Engineering Illusion

Prompt engineering emerged from a genuinely useful observation: the way you phrase a request to a large language model significantly affects the quality of the response. Adding context helps. Specifying format helps. Breaking complex tasks into steps helps. These are real techniques that produce real improvements.

But somewhere along the way, this tactical insight got elevated into something it was never meant to be. Organizations began treating prompt engineering as the primary lever for AI success, hiring prompt engineers, building elaborate prompt libraries, and investing in prompt optimization as if this were where competitive advantage lived.

The data tells a different story. Research from Gartner suggests that most AI projects fail to move from pilot to production.[1] When organizations fail to scale AI, the causes are rarely about prompts. The obstacles are data quality, integration complexity, governance gaps, and organizational change.

| Focus Area | What It Improves | What It Doesn't Fix |
|---|---|---|
| Prompt optimization | Output quality for single queries | Bad data, broken processes, missing governance |
| Prompt libraries | Consistency, reuse | Integration complexity, organizational change |
| Better models | Raw capability | Data accessibility, workflow redesign |
| Foundations (data, governance, process) | Scalability, sustainability | Nothing: this is the real work |

An MIT Sloan Management Review analysis found that most enterprise AI failures stem from architectural and governance gaps, not poor models or prompts.[2] A recent industry analysis found that a significant percentage of companies abandoned AI initiatives in 2025, and the pattern was consistent: failures occurred not because the technology was incapable, but because it was deployed on unstable foundations.[3]

Prompt engineering cannot fix bad data. It cannot compensate for missing governance. It cannot redesign workflows or build organizational capabilities. Focusing on prompts while neglecting these foundations is like optimizing the wording of your search queries while your database is corrupted.

What Actually Determines Success

The organizations achieving meaningful results from AI share common characteristics, and prompt sophistication is rarely among them.

Data foundations that work

Every successful AI implementation rests on data that is accurate, accessible, and appropriate for the use case. This sounds obvious, but the gap between aspiration and reality is enormous. Research suggests that only a small fraction of organizations report that all their data is accessible for AI initiatives.[4]

The biggest barriers to AI success are not algorithms but data readiness:

- Data accuracy and bias concerns affect nearly half of organizations

- Insufficient proprietary data limits another large segment

- Governance gaps create compliance and scaling obstacles

The winners are not those with the best prompts. They are those with clean, well-governed data that AI systems can actually use.

Process redesign, not process overlay

Research consistently shows that the largest gains from generative AI come from workflow redesign. Yet only a minority of companies have meaningfully re-engineered even parts of their processes.[5] Most are layering AI onto legacy workflows, which limits impact regardless of how sophisticated the prompting becomes.

An AI assistant that helps with a broken process is still participating in a broken process. The organizations capturing value are those willing to ask: how should this work get done if AI is a participant from the start?

Governance as enablement

The rapid rollout of AI tools has outpaced policies around privacy, intellectual property, and compliance. Industry surveys indicate that a majority of executives cite lack of governance as their primary concern, while only a minority have formal audit processes for AI systems.[6]

Governance is not a constraint on AI success. It is a prerequisite for sustainable deployment. Without clear policies on data usage, model oversight, and decision accountability, organizations cannot scale AI beyond isolated experiments.

Organizational capability, not just technical capability

AI transformation requires skills that many organizations lack: data engineering, ML operations, change management, and domain expertise that can bridge technical possibilities with business needs. The talent shortage is real. And it cannot be solved by hiring prompt engineers while neglecting the deeper capabilities that determine whether AI delivers value.

The Context Engineering Shift

A more sophisticated framing has emerged recently: context engineering. This concept recognizes that AI performance depends not just on how you phrase a question, but on the entire context surrounding that question: the data available, the business rules applicable, the history of prior interactions, the constraints that should apply.[7]

Context engineering requires integration across data architecture, knowledge management systems, and operational platforms. It is not something an AI team solves alone. It requires collaboration between data engineering, enterprise architecture, security, and those who understand processes and strategy.

This framing is useful because it highlights what prompt engineering misses: the work required to make AI systems genuinely context-aware. A prompt can ask an AI to consider certain factors. Context engineering builds the infrastructure that automatically provides those factors, reliably, at scale, integrated with the systems where work actually happens.

The shift is from "how do I ask this AI a question?" to "how do I build systems that continuously supply AI with the right operational context?" That is an architectural question, not a linguistic one.
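
To make the distinction concrete, here is a minimal Python sketch of what "supplying context" might look like in code. Everything in it is an assumption for illustration: `ContextSources`, `build_context`, the customer record, and the rule text are hypothetical stand-ins for the data stores, policy engines, and interaction logs a real organization would integrate. The point is only that the question arrives at the model already wrapped in data, rules, and history that no prompt author had to type in.

```python
from dataclasses import dataclass, field


@dataclass
class ContextSources:
    """Hypothetical stand-ins for systems a real deployment would query."""
    records: dict[str, dict]      # e.g. a customer data store
    business_rules: list[str]     # e.g. rules exported from a policy engine
    history: dict[str, list[str]] = field(default_factory=dict)  # prior interactions


def build_context(sources: ContextSources, customer_id: str, question: str,
                  max_history: int = 3) -> str:
    """Assemble operational context around a question before it reaches a model."""
    record = sources.records.get(customer_id)
    if record is None:
        # Fail loudly rather than let the model answer without data.
        raise KeyError(f"no record for customer {customer_id!r}")

    past = sources.history.get(customer_id, [])
    recent = past[-max_history:] if max_history > 0 else []

    sections = [
        "## Customer record",
        *(f"{key}: {value}" for key, value in sorted(record.items())),
        "## Business rules",
        *(f"- {rule}" for rule in sources.business_rules),
        "## Recent interactions",
        *(f"- {item}" for item in recent),
        "## Question",
        question,
    ]
    return "\n".join(sections)


# Illustrative data only; none of these values come from a real system.
sources = ContextSources(
    records={"c-42": {"plan": "enterprise", "region": "EU"}},
    business_rules=["Refunds over 500 EUR need manager approval."],
    history={"c-42": ["Asked about invoice", "Reported login issue"]},
)
print(build_context(sources, "c-42", "Can this customer get a refund?"))
```

The string formatting is the trivial part. The hard part is everything the sketch takes for granted: that the record exists, is accurate, and can be reached by the system that needs it.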

Why Organizations Get Stuck

Understanding why organizations focus on prompts rather than foundations helps explain the persistent gap between AI investment and AI results.

- Prompting is visible and immediate. You can write a better prompt in minutes and see improved output instantly. Building data infrastructure takes months. Redesigning processes takes longer. The feedback loop for prompt improvement is tight; the feedback loop for capability building is diffuse and delayed.

- Prompting feels like progress. Teams can demonstrate prompt improvements in demos and meetings. They can build prompt libraries and count the entries. These are tangible artifacts that suggest momentum. The deeper work of data quality and process redesign is harder to showcase and easier to defer.

- Prompting is cheaper upfront. A prompt engineer costs less than a data engineering team. A prompt optimization project has a smaller budget than a data governance initiative. The temptation to start with the affordable, visible work is understandable, even when that work cannot deliver the outcomes organizations need.

- Prompting avoids organizational change. Better prompts do not require anyone to change how they work. They do not challenge existing processes or power structures. They slot neatly into current workflows. The deeper work of AI transformation requires confronting how work gets done, which creates friction that prompt optimization avoids.

These dynamics create a predictable pattern: organizations invest in prompting because it is accessible and visible, defer the harder foundational work, achieve limited results, and conclude that AI does not deliver on its promise. The problem is not AI. The problem is mistaking tactics for strategy.

Building Sustainable Capability

What does it actually look like to build AI capability rather than just AI usage?

Data as strategic asset

Organizations serious about AI treat data quality, accessibility, and governance as strategic priorities rather than technical details. They invest in data engineering, establish clear ownership, and build the infrastructure that makes data AI-ready. This is unglamorous work that rarely makes headlines, but it determines whether AI initiatives have solid foundations or unstable ones.
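
As a rough illustration of what "treating data quality as a priority" can mean in practice, the following Python sketch scores a dataset on two simple dimensions: completeness and validity. The function names, the sample records, and the field checks are all hypothetical, and real data quality programs track many more dimensions (timeliness, uniqueness, lineage). This is a sketch under those assumptions, not a standard.

```python
from typing import Callable

Record = dict[str, object]


def is_present(value: object) -> bool:
    """Treat None and empty strings as missing."""
    return value is not None and value != ""


def completeness(records: list[Record], required: list[str]) -> float:
    """Share of required fields that are present across all records."""
    if not records or not required:
        return 0.0
    filled = sum(
        1
        for record in records
        for name in required
        if is_present(record.get(name))
    )
    return filled / (len(records) * len(required))


def validity(records: list[Record],
             checks: dict[str, Callable[[object], bool]]) -> float:
    """Share of present values that pass their field-level check."""
    results = [
        check(record[name])
        for record in records
        for name, check in checks.items()
        if is_present(record.get(name))
    ]
    return sum(results) / len(results) if results else 0.0


# Illustrative records and checks; both are assumptions for this example.
records = [
    {"id": 1, "email": "a@example.com", "country": "DE"},
    {"id": 2, "email": "", "country": "Germany"},
    {"id": 3, "email": "not-an-email", "country": None},
]
required = ["id", "email", "country"]
checks = {
    "email": lambda v: "@" in str(v),
    "country": lambda v: len(str(v)) == 2,   # expects a two-letter code
}

print(f"completeness: {completeness(records, required):.2f}")
print(f"validity:     {validity(records, checks):.2f}")
```

Scores like these only matter once someone owns them: a named team that sees the number move and is accountable for fixing what moved it.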

Architecture for context

Rather than treating each AI application as a standalone project, capable organizations build architectural layers that provide context across applications. Knowledge graphs that capture relationships. Semantic layers that provide consistent business definitions. Integration patterns that connect AI to operational systems. This infrastructure enables AI applications that understand organizational context rather than operating in isolation.

Process transformation, not automation

The goal is not to automate existing processes but to reimagine how work should flow when AI participates. This requires domain expertise, process analysis, and willingness to challenge established patterns. It is harder than automation, and more valuable.

Governance that enables

Effective AI governance answers critical questions: what data can AI access, how are decisions audited, who is accountable for outcomes, how are models monitored for drift and bias. Organizations that treat governance as a checkbox exercise will struggle to scale. Organizations that treat governance as enabling infrastructure will move faster with confidence.
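
A small Python sketch shows how three of those questions (what data can AI access, how are decisions audited, who is accountable) could be encoded rather than left in a policy document. The `AccessPolicy` class, the use case name, and the classification labels are hypothetical; a real deployment would sit on top of an existing identity and data classification system. Model monitoring for drift and bias is out of scope for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AccessPolicy:
    """Hypothetical policy: which data classifications each AI use case may read."""
    allowed: dict[str, set[str]]
    audit_log: list[dict] = field(default_factory=list)

    def check(self, use_case: str, classification: str, owner: str) -> bool:
        """Decide whether access is permitted and record the decision either way."""
        permitted = classification in self.allowed.get(use_case, set())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "use_case": use_case,
            "classification": classification,
            "accountable_owner": owner,
            "permitted": permitted,
        })
        return permitted


# Illustrative policy: the assistant may read public and internal data only.
policy = AccessPolicy(allowed={"support-assistant": {"public", "internal"}})
policy.check("support-assistant", "internal", owner="head-of-support")
policy.check("support-assistant", "restricted", owner="head-of-support")

for entry in policy.audit_log:
    print(entry["use_case"], entry["classification"], entry["permitted"])
```

Note that denied requests are logged as well as permitted ones. An audit trail that records only successes cannot answer the question of what the system tried to do.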

Skills across the organization

AI capability is not solely the province of technical teams. Business leaders need literacy in what AI can and cannot do. Domain experts need the ability to guide AI applications toward valuable use cases. Change management skills are essential for adoption. Building these capabilities across the organization, not just in a centralized AI team, determines whether AI becomes embedded in operations or remains a peripheral experiment.

The Measurement Problem

Part of why prompt engineering receives disproportionate attention is that it is easy to measure. You can A/B test prompts. You can track output quality metrics. You can demonstrate improvement.

The foundational work is harder to quantify. How do you measure the value of better data governance? What is the ROI of process redesign before the redesigned process is operating? How do you attribute outcomes to architectural decisions made months earlier?

This measurement difficulty creates organizational dynamics that favor visible, measurable work (prompting) over less visible, harder-to-measure work (foundations). Overcoming this bias requires leadership willing to invest in capabilities whose payoff is diffuse and delayed, trusting that the foundations will enable outcomes that prompt optimization alone cannot achieve.

The organizations that get this right often establish different metrics:

- Data quality scores: measuring foundation health

- Time from pilot to production: measuring scaling capability

- Percentage of AI initiatives reaching scale: measuring sustainable deployment

- Breadth of AI adoption across business functions: measuring organizational capability

These metrics focus on capability building rather than individual prompt performance.
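
Three of these metrics can be computed from nothing more than a list of initiatives and their dates, as the Python sketch below suggests. The `Initiative` record, the sample portfolio, and the metric names are assumptions made for illustration; data quality scoring is left out because it depends on the datasets themselves rather than on the portfolio.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class Initiative:
    name: str
    function: str                     # business function, e.g. "finance"
    pilot_start: date
    production_date: Optional[date]   # None if it never left pilot


def capability_metrics(initiatives: list[Initiative],
                       all_functions: set[str]) -> dict:
    """Summarize scaling capability across a portfolio of AI initiatives."""
    scaled = [i for i in initiatives if i.production_date is not None]
    days = [(i.production_date - i.pilot_start).days for i in scaled]
    covered = {i.function for i in scaled} & all_functions

    share_scaled = len(scaled) / len(initiatives) if initiatives else 0.0
    coverage = len(covered) / len(all_functions) if all_functions else 0.0
    return {
        "median_days_pilot_to_production": median(days) if days else None,
        "share_reaching_production": share_scaled,
        "function_coverage": coverage,
    }


# Illustrative portfolio; names and dates are invented for the example.
initiatives = [
    Initiative("invoice triage", "finance", date(2025, 1, 6), date(2025, 4, 16)),
    Initiative("support summaries", "support", date(2025, 2, 3), date(2025, 8, 22)),
    Initiative("contract review", "legal", date(2025, 3, 1), None),
    Initiative("forecast notes", "finance", date(2025, 5, 1), None),
]
all_functions = {"finance", "support", "legal", "hr"}

print(capability_metrics(initiatives, all_functions))
```

None of these numbers says anything about prompt quality, which is the point: they track whether the organization is getting better at moving AI into production.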

What This Means for Your Organization

If you recognize your organization in this critique, you are not alone. The prompt engineering trap is widespread precisely because it is seductive: a real technique that produces real improvements, elevated to a strategic position it cannot support.

Several steps can redirect effort toward sustainable capability:

- Audit honestly. Where are your AI initiatives actually stuck? If the answer involves data access, integration complexity, governance questions, or organizational resistance, prompt optimization will not help. Name the real obstacles.

- Invest in foundations. Data quality, data governance, and data accessibility are not preliminary work to get out of the way before the real AI work begins. They are the real work. Budget and staff accordingly.

- Redesign, don't just automate. Before deploying AI in a process, ask whether the process itself makes sense. The largest gains come from transformation, not from accelerating dysfunction.

- Build capability, not just applications. Each AI initiative should leave the organization more capable of the next one: reusable infrastructure, institutional knowledge, trained people. If every project starts from scratch, you are not building capability.

- Resist the lure of the visible. Prompt libraries and optimization projects produce tangible artifacts that feel like progress. Do not let their visibility distract from the foundational work that actually determines outcomes.

A Closing Thought

Prompt engineering is a real skill with real value. The people who do it well help organizations extract more value from AI systems. This is not an argument against prompting. It is an argument against prompting as strategy.

The organizations that will lead in AI are not those with the cleverest prompts. They are those that treat AI as a catalyst for transformation rather than a tool to bolt onto existing operations. They invest in data as a strategic asset. They redesign processes rather than automating dysfunction. They build governance that enables scale. They develop capabilities across the organization, not just in technical teams.

This is harder than optimizing prompts. It takes longer. It costs more. It requires confronting organizational challenges that prompt engineering conveniently avoids.

But it is the only path to sustainable AI capability. And in a landscape where most pilots fail to deliver impact, the organizations that build real foundations will be the ones still standing when the prompt engineering hype fades.

The question is not whether you have good prompts. The question is whether you have the foundations to make AI work at scale. Everything else is optimization at the margins.

This is the tenth in our January series on data and AI strategy for 2026.

Sources

1. Gartner research on AI project success rates. Most AI projects fail to move from pilot to production. gartner.com

2. MIT Sloan Management Review, "Avoid ML Failures by Asking the Right Questions" (2024). Analysis emphasizing architectural, governance, and organizational factors. sloanreview.mit.edu

3. RAND Corporation, "Root Causes of Failure for AI Projects" (2024). Research on AI initiative abandonment patterns. rand.org

4. BCG & MIT Sloan Management Review, "Where's the Value in AI?" (2024). Research on data readiness barriers to AI success. bcg.com

5. McKinsey Global Institute, "The State of AI" (2024). Research on AI adoption patterns and process re-engineering rates. mckinsey.com

6. PwC, "AI Business Survey" (2024). Executive perspectives on AI governance and deployment challenges. pwc.com

7. "Context engineering" is an emerging concept in AI implementation, emphasizing architectural approaches to providing AI with operational context beyond prompt optimization.