One agent is a tool. Multiple agents are a team. And teams need management.

The moment you go from a single AI agent to several working in parallel, you inherit every coordination problem that's ever existed in project management, except your team members have no memory of each other, no shared awareness, and no instinct to stop when something's off. Most of the tooling available today was built for the single-agent world. The multi-agent world needs something different.

We hit that wall. So we built the missing layer.

The Shift from Single Agent to Agent Team

The industry is moving fast on this front, and we've been paying attention. Anthropic released Agent Teams as part of Claude Code[1], giving developers the ability to coordinate multiple Claude instances working in parallel on shared codebases. Instead of one agent grinding through problems sequentially, you can now have a lead agent delegating subtasks to specialized teammates, each operating in its own context window and communicating directly with the others. It's orchestrated work, not just parallel execution.

On the open source side, OpenClaw has emerged as one of the notable projects in AI infrastructure[2]. It's a self-hosted gateway that sits between language models, messaging platforms, tools, and long-term memory. The community around it was already orchestrating multi-agent sessions with custom skills before Anthropic shipped native support. The convergence is clear: the tools for running agent teams are here. What's been missing is the operational layer on top.

That's what Agent Commander is.

Why Chat Isn't Enough

When you run agent teams, whether through Claude Code's native coordination or through platforms like OpenClaw, the chat interface becomes a bottleneck. You need to see what each agent is doing right now, not after it finishes. You need to inspect what an agent knows and correct it when it's working from bad assumptions. You need approval gates for high-stakes operations. You need cost visibility across agents, tasks, and sessions. And you need the ability to replay what happened when something goes wrong.

Chat is synchronous and linear. Agent teams are concurrent and stateful. The tooling has to match the problem.

What We Built

Agent Commander is a web-based control plane for orchestrating teams of AI agents. It gives us real-time visibility into everything our agents are doing, and it gives us the controls to intervene when we need to.

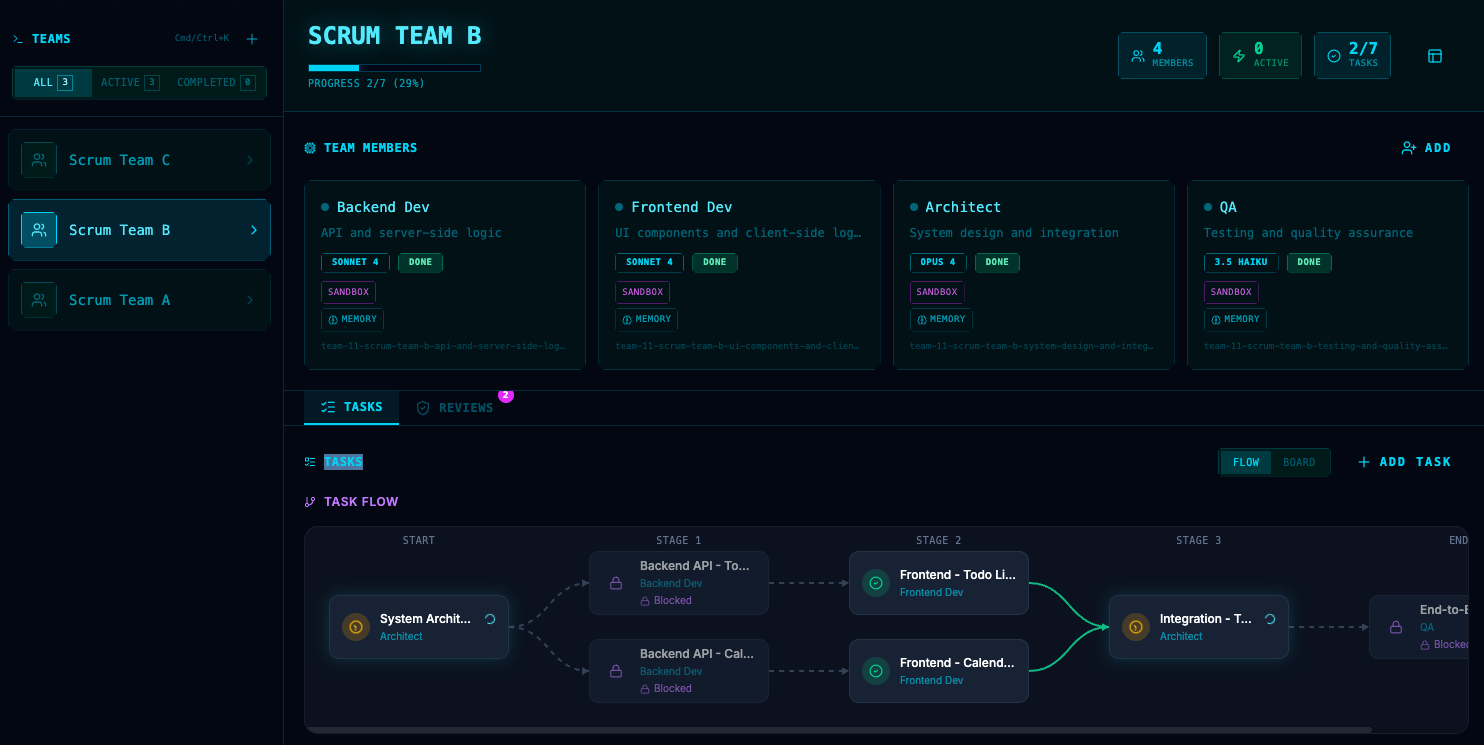

The system has twelve purpose-built panels, each solving a specific operational problem. The ones described below are a subset.

Team management lets us configure which agents are in play, what roles they're filling, and which models they're routing through. Task management shows us the work in both list and graph views, so we can see dependencies, handoffs, and bottlenecks at a glance. For parallel agent work, the graph view is essential. You can't reason about concurrent execution in a linear list.

Real-time log streaming is probably the most valuable piece. We can watch an agent think, write code, hit an error, and retry, all as it happens. Before we had this, debugging meant digging through log files after the fact. Now we usually know what went wrong before the agent is done failing.

Memory inspection lets us see exactly what an agent knows. When an agent goes sideways, nine times out of ten it's a memory problem: it learned something incorrect, or it's missing context. Being able to browse, search, and edit an agent's memory directly saves hours of guessing and restarting.

The review panel is our human-in-the-loop checkpoint. When an agent reaches a decision point that requires approval, like deploying to production, sending a message, or deleting files, it pauses and surfaces a review card with context, the proposed action, and the consequences. We approve or reject. The agent continues or replans. If you're running agents on anything that matters, you need this.
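To make the approve-or-reject flow concrete, here is a minimal sketch of an approval gate. It is an illustration, not Agent Commander's actual code: the `ReviewCard` fields mirror the description above, while `HIGH_STAKES`, `gate`, and the `ask_human` callback are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class ReviewCard:
    """What a reviewer sees: context, proposed action, consequences."""
    agent: str
    context: str
    proposed_action: str
    consequences: str


# Hypothetical action types that must pause for a human decision.
HIGH_STAKES = {"deploy_production", "send_message", "delete_files"}


def gate(action_type, card, ask_human):
    """Return "execute" or "replan" for a proposed action.

    Low-stakes actions pass straight through. High-stakes actions
    block on ask_human(card), which must return a Decision.
    """
    if action_type not in HIGH_STAKES:
        return "execute"
    decision = ask_human(card)
    return "execute" if decision is Decision.APPROVED else "replan"


card = ReviewCard(
    agent="api-dev",
    context="Cleanup after a failed migration",
    proposed_action="Delete the contents of ./tmp/migrations",
    consequences="Files are removed permanently",
)

# A reviewer who rejects sends the agent back to replanning.
print(gate("delete_files", card, lambda c: Decision.REJECTED))  # replan
```

In a real system `ask_human` would suspend the agent until someone acts on the card in the UI; a plain callback keeps the sketch testable.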

And the usage dashboard keeps cost visible. It shows spend by agent, by model, by task, by day. If you're not tracking this, you will be surprised by your bill. We found agents burning 10x what they should on certain tasks. Visibility was the fix.
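The breakdown by agent, model, task, and day comes down to grouping usage records along one dimension at a time. The sketch below shows that idea under assumptions of our own for illustration: the `UsageEvent` record, the per-task budgets, and the sample figures are all hypothetical, not data from the dashboard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    """One billed model call (hypothetical record shape)."""
    agent: str
    model: str
    task: str
    day: str  # ISO date, e.g. "2025-01-02"
    cost_usd: float


def spend_by(events, dimension):
    """Total cost grouped by "agent", "model", "task", or "day"."""
    totals = defaultdict(float)
    for event in events:
        totals[getattr(event, dimension)] += event.cost_usd
    return dict(totals)


def over_budget(events, task_budgets_usd):
    """Tasks whose total spend exceeds their (assumed) budget."""
    spent = spend_by(events, "task")
    return {
        task: cost
        for task, cost in spent.items()
        if task in task_budgets_usd and cost > task_budgets_usd[task]
    }


# Made-up sample data for illustration only.
events = [
    UsageEvent("api-dev", "sonnet", "implement_api", "2025-01-01", 1.50),
    UsageEvent("api-dev", "sonnet", "implement_api", "2025-01-02", 2.50),
    UsageEvent("doc-writer", "haiku", "write_docs", "2025-01-02", 0.25),
]

print(spend_by(events, "agent"))
print(over_budget(events, {"implement_api": 3.0, "write_docs": 1.0}))
```

Comparing spend against even a rough per-task budget is one simple way to surface the kind of runaway task described above.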

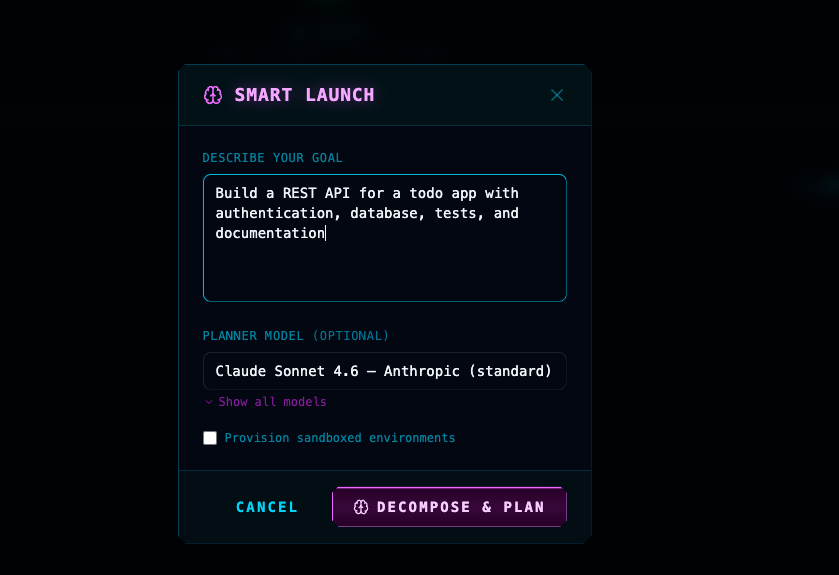

Smart Launch: From Goal to Team Plan

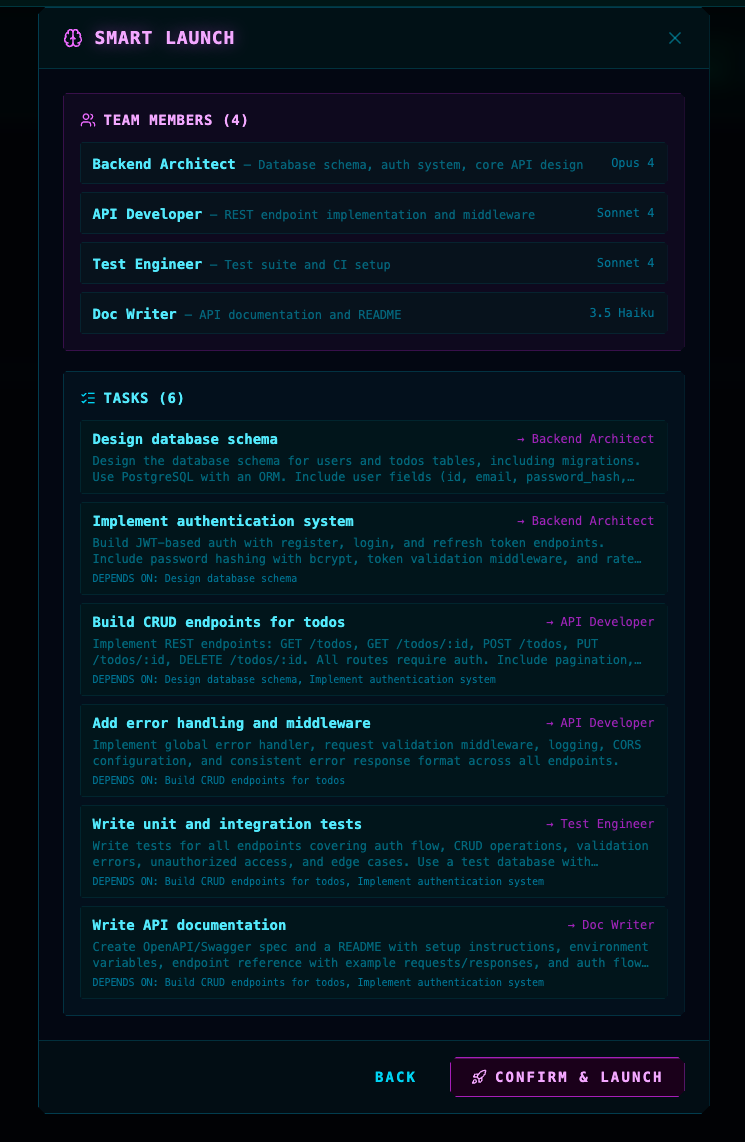

One of the capabilities we're most excited about is Smart Launch. Instead of manually configuring agents and decomposing work, you describe your goal in plain language and select a planner model. Agent Commander decomposes that goal into a team of specialized agents with appropriate model assignments, then generates a full task plan with dependencies.

In the example above, a single prompt to "Build a REST API for a todo app with authentication, database, tests, and documentation" produces a four-member team: a Backend Architect on Opus 4, an API Developer and Test Engineer on Sonnet 4, and a Doc Writer on 3.5 Haiku. Each task has explicit dependencies, so the system knows what can run in parallel and what must wait.

This turns minutes of manual setup into a single conversation turn. And because the decomposition is visible before launch, you can review, adjust, and approve before any agent starts working.
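Explicit dependencies are what let a scheduler work out which tasks can run in parallel and which must wait. As a rough sketch of that idea (not Smart Launch's implementation), the standard library's `graphlib.TopologicalSorter` can group a plan into waves. The task names and dependency edges below are hypothetical, loosely modeled on the todo-API example.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each task maps to the tasks it depends on.
plan = {
    "design_schema": [],
    "implement_api": ["design_schema"],
    "write_tests": ["implement_api"],
    "write_docs": ["implement_api"],
}


def parallel_waves(dependencies):
    """Group tasks into waves; tasks in one wave can run concurrently.

    Raises graphlib.CycleError if the dependencies contain a cycle.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        # Every task whose dependencies are all finished is ready now.
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


for number, wave in enumerate(parallel_waves(plan), start=1):
    print(number, wave)
# 1 ['design_schema']
# 2 ['implement_api']
# 3 ['write_docs', 'write_tests']
```

Here the tests and the documentation land in the same wave because both depend only on the API implementation, so two agents could pick them up at once.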

Why This Matters for Our Clients

At Semper AI, we don't just talk about AI. We build with it. We run agent teams on real work, including our own internal operations and client engagements. Agent Commander is part of how we do that.

This is the same philosophy we bring to every client engagement. Before you deploy AI, you need the foundation to support it. Before you scale agents, you need the infrastructure to observe, coordinate, and control them. The tools are advancing fast. Anthropic's Agent Teams feature turns Claude Code into something closer to an AI development team than a coding assistant. OpenClaw gives you a self-hosted gateway that connects models to everything you use. But tools without operational discipline are just expensive experiments.

We've invested in building that operational layer because it's what separates teams that are experimenting with agents from teams that are running them in production. The chat interface got us started. The control plane is what makes agent work sustainable.

If you're exploring how agent teams could work for your organization, or if you've already started and you're hitting the same walls, we should talk.

Sources

[1] Anthropic, "Introducing Claude Code and the Claude Code SDK" (2025). anthropic.com

[2] OpenClaw, "OpenClaw: Personal AI Assistant" (2025). openclaw.ai

Semper AI is a veteran-owned data and AI agency that helps businesses build solid foundations before implementing AI solutions. We don't just advise on AI. We build with it every day.